Authors:

(1) Zhihang Ren, University of California, Berkeley and these authors contributed equally to this work (Email: peter.zhren@berkeley.edu);

(2) Jefferson Ortega, University of California, Berkeley and these authors contributed equally to this work (Email: jefferson_ortega@berkeley.edu);

(3) Yifan Wang, University of California, Berkeley and these authors contributed equally to this work (Email: wyf020803@berkeley.edu);

(4) Zhimin Chen, University of California, Berkeley (Email: zhimin@berkeley.edu);

(5) Yunhui Guo, University of Texas at Dallas (Email: yunhui.guo@utdallas.edu);

(6) Stella X. Yu, University of California, Berkeley and University of Michigan, Ann Arbor (Email: stellayu@umich.edu);

(7) David Whitney, University of California, Berkeley (Email: dwhitney@berkeley.edu).

Table of Links

- Abstract and Intro

- Related Wok

- VEATIC Dataset

- Experiments

- Discussion

- Conclusion

- More About Stimuli

- Annotation Details

- Outlier Processing

- Subject Agreement Across Videos

- Familiarity and Enjoyment Ratings and References

Abstract

Human affect recognition has been a significant topic in psychophysics and computer vision. However, the currently published datasets have many limitations. For example, most datasets contain frames that contain only information about facial expressions. Due to the limitations of previous datasets, it is very hard to either understand the mechanisms for affect recognition of humans or generalize well on common cases for computer vision models trained on those datasets. In this work, we introduce a brand new large dataset, the Video-based Emotion and Affect Tracking in Context Dataset (VEATIC), that can conquer the limitations of the previous datasets. VEATIC has 124 video clips from Hollywood movies, documentaries, and home videos with continuous valence and arousal ratings of each frame via real-time annotation. Along with the dataset, we propose a new computer vision task to infer the affect of the selected character via both context and character information in each video frame. Additionally, we propose a simple model to benchmark this new computer vision task. We also compare the performance of the pretrained model using our dataset with other similar datasets. Experiments show the competing results of our pretrained model via VEATIC, indicating the generalizability of VEATIC. Our dataset is available at https://veatic.github.io.

1. Introduction

Recognizing human affect is of vital importance in our daily life. We can infer people’s feelings and predict their subsequent reactions based on their facial expressions, interactions with other people, and the context of the scene. It is an invaluable part of our communication. Thus, many studies are devoted to understanding the mechanism of affect recognition. With the emergence of Artificial Intelligence (AI), many studies have also proposed algorithms to automatically perceive and interpret human affect, with the potential implication that systems like robots and virtual humans may interact with people in a naturalistic way.

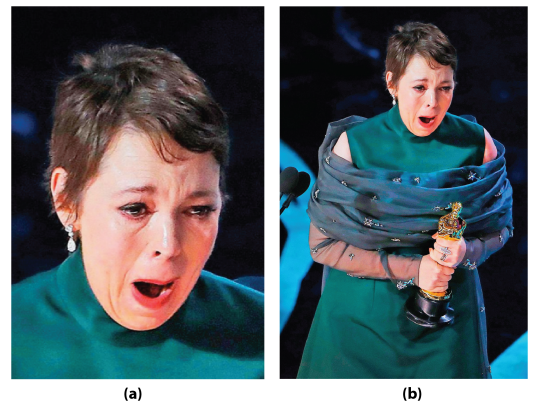

When tasked with emotion recognition in the real world, humans have access to much more information than just facial expressions. Despite this, many studies that investigate emotion recognition often use static stimuli of facial expressions that are isolated from context, especially in assessments of psychological disorders [3, 18] and in computer vision models [60, 62]. Additionally, while previous studies continue to investigate the process by which humans perceive emotion, many of these studies fail to probe how emotion recognition is influenced by contextual factors like the visual scene, background information, body movements, other faces, and even our beliefs, desires, and conceptual processing [4, 34, 8, 42, 44]. Interestingly, visual contextual information has been found to be automatically and effortlessly integrated with facial expressions [2]. It can also override facial cues during emotional judgments [26](Figure 1), and can even influence emotion perception at the early stages of visual processing [7]. In fact, contextual information is often just as valuable to understand a person’s emotion as the face itself [8, 9, 10]. The growing evidence of the importance of contextual information in emotion recognition [4] demands that researchers reevaluate the experimental paradigms in which they investigate human emotion recognition. For example, to better understand the mechanisms and processes that lead to human emotion recognition during everyday social interactions, the generalizability of research studies should be seriously considered. Most importantly, datasets for emotion and affect tracking should not only contain faces or isolated specific characters, but contextual factors such as background visual scene information, and interactions between characters should also be included.

In order to represent the emotional state of humans, numerous studies in Psychology and Neuroscience have proposed methods to quantify humans’ emotional state which include both categorical and continuous models of emotion. The most famous and dominant categorical theory of emotion is the theory of basic emotions which states that certain emotions are universally recognized across cultures (anger, fear, happiness, etc.) and that all emotions differ in their behavioral and physiological response, their appraisal, and in expression [16]. Alternatively, the circumplex model of affect, a continuous model of emotion, proposes that all affective states arise from two neurophysiological systems related to valence and arousal and all emotions can be described by a linear combination of these two dimensions [52, 47, 53]. Another model of emotion recognition, the Facial Action Coding System model, states that all facial expressions can be broken down into the core components of muscle movements called Action Units [17]. Previous emotion recognition models have been built with these different models in mind [61, 63, 41]. However, few models focus on measuring affect using continuous dimensions, an unfortunate product of the dearth of annotated databases available for affective computing.

Based on the aforementioned emotion metrics, many emotion recognition datasets have been developed. Early datasets, such as SAL [15], SEMAINE [39], Belfast induced [58], DEAP [28], and MAHNOB-HCI [59] are collected under highly controlled lab settings and are usually small in data size. These previous datasets lack diversity in terms of characters, motions, scene illumination, and backgrounds. Moreover, the representations in early datasets are usually discrete. Recent datasets, like RECOLA [49], MELD [46], OMG-emotion dataset [5], Aff-Wild [69], and Aff-Wild2 [29, 30], start to collect emotional states via continuous ratings and utilize videos on the internet or called ”in-the-wild”. However, these datasets lack contextual information and focus solely on facial expressions. The frames are dominated by characters or particular faces. Furthermore, the aforementioned datasets have limited annotators (usually less than 10). As human observers have strong individual differences and suffer from many biases [12, 45, 48], limited annotators can lead to substantial annotation biases.

In this study, we introduce the Video-based Emotion and Affect Tracking in Context Dataset (VEATIC, /ve"ætIc/), a large dataset that can be beneficial to both Psychology and computer vision groups. The dataset includes 124 video clips from Hollywood movies, documentaries, and home videos with continuous valence and arousal ratings of each frame via real-time annotation. We also recruited a large number of participants to annotate the data. Based on this dataset, we propose a new computer vision task, i.e., automatically inferring the affect of the selected character via both context and character information in each video frame. In this study, we also provide a simple solution to this task. Experiments show the effectiveness of the method as well as the benefits of the proposed VEATIC dataset. In a nutshell, the main contributions of this work are:

• We build the first large video dataset, VEATIC, for emotion and affect tracking that contains both facial features and contextual factors. The dataset has continuous valence and arousal ratings for each frame.

• In order to alleviate the biases from annotators, we recruited a large set of annotators (192 in total) to annotate the dataset compared to previous datasets (usually less than 10).

• We provide a baseline model to predict the arousal and valence of the selected character from each frame using both character information and contextual factors.

This paper is available on arxiv under CC 4.0 license.